I’ve started uploading and documenting some Pure Data patches which I’ve used for generating Earcons and Tactons – hopefully they’ll be useful to someone. Check them out here.


Earcons and Tactons in Pure Data

In recent projects I’ve been using Pure Data for generating Earcons and Tactons; I’ve written more about generating Tactons with Pure Data here. I’m sharing these here in the hope that they’ll be useful to someone – if you use or adapt them, I’d be very interested to hear what cool things you’re doing with them!

Everything is on GitHub: https://github.com/efreeman/pd-earcons-tactons

Tactons for Selection Gestures

Source here. This patch generates the tactile feedback I used in the above-device tactile feedback project. Feedback was generated in Pure Data using commands sent over a TCP socket (localhost:34567; change port number in network-listener sub-patch). Looking into the network-listener patch shows the message protocol:

- y: Enable output

- z: Disable output

- on: Currently does nothing (unused outlet)

- off: Stops all output in progress

- ramp_a: Ramp amplitude from 0 to 1, at 150 Hz over 1000 ms

- ramp_a_exp: As above, with exponential increase rather than linear

- ramp_f: Ramp frequency from 0 to 150 Hz over 1000 ms

- ramp_rough: Modulate a 150 Hz wave with a wave whose frequency changes from 0 Hz to 75 Hz over 1000 ms

- const: Produce a 150 Hz wave for 1000 ms

- pulse: Produce a 150 Hz wave for 200 ms

We used 150 Hz for all sine waves as this was the resonant frequency of our actuator; change values as needed. Duration can be altered from 1000 ms too.

This is deliberately a simple patch: it only generates feedback on demand. Finer control over the feedback is easier to implement in a more expressive programming language, and sockets are a nice low-cost way of communicating between Pure Data and other applications.
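As a rough sketch of the "other application" side, the Python below sends one of the commands listed above to the patch over TCP. The function names are hypothetical, and the `;` plus newline terminator is an assumption about the wire format (it is Pd's usual message framing); check the network-listener sub-patch and adjust as needed.

```python
import socket

# Commands from the message protocol listed above.
COMMANDS = {"y", "z", "on", "off", "ramp_a", "ramp_a_exp",
            "ramp_f", "ramp_rough", "const", "pulse"}


def encode_command(command, terminator=";\n"):
    """Return the bytes to send for one command.

    The default terminator is an assumption, not taken from the patch.
    """
    if command not in COMMANDS:
        raise ValueError(f"unknown command: {command!r}")
    return (command + terminator).encode("ascii")


def send_command(command, host="localhost", port=34567):
    """Open a TCP connection to the patch, send one command, then close."""
    with socket.create_connection((host, port), timeout=2.0) as sock:
        sock.sendall(encode_command(command))
```

With the patch open in Pure Data, calling `send_command("y")` followed by `send_command("pulse")` should enable output and then request the 200 ms pulse, provided the framing assumption holds.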

Single Tone Generator

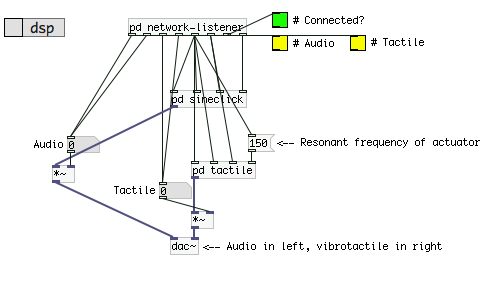

Source here. This patch generates an enveloped tone using parameters sent over a socket. As in my other patches, the TCP socket is on localhost port 34567; this can be changed in the network-listener sub-patch. Here I’ve used the left and right channel for different types of tone: audio in the left, vibrotactile in the right. The right channel is at a fixed frequency to drive an actuator, whereas the left channel can vary tone frequency. Audio and tactile feedback can be enabled/disabled on demand. Message protocol:

- a0: Audio off

- a1: Audio on

- t0: Tactile off

- t1: Tactile on

- <attack> <decay> <freq>: Enveloped tone

Tone generation uses three parameters: attack time (in ms), decay time (in ms) and oscillating frequency (in Hz). Attack time specifies how long the tone takes to reach full amplitude. Decay time specifies how long the tone takes to return to zero amplitude. The tone is also sustained at full amplitude for the decay duration. Frequency specifies the audio frequency but not the tactile frequency, which is fixed.

As an example, “100 500 440” produces a 1100 ms-long tone at 440 Hz; it takes 100 ms to reach full amplitude, is sustained for 500 ms and then returns to zero amplitude after a further 500 ms. If you wish to further specify the sustain time, change the arguments passed into vline~ in the sineclick sub-patch.
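The arithmetic in that example can be written out as a small Python sketch. It assumes straight-line segments and a sustain equal to the decay time, as described above; the function names are hypothetical and nothing here is read from the sineclick sub-patch itself.

```python
def envelope(attack_ms, decay_ms):
    """Return (time_ms, amplitude) breakpoints for the envelope described above."""
    sustain_end = attack_ms + decay_ms
    return [
        (0, 0.0),                       # start silent
        (attack_ms, 1.0),               # end of attack: full amplitude
        (sustain_end, 1.0),             # sustained for the decay duration
        (sustain_end + decay_ms, 0.0),  # end of decay: silent again
    ]


def total_duration_ms(attack_ms, decay_ms):
    """Total tone length: attack + sustain (= decay) + decay."""
    return envelope(attack_ms, decay_ms)[-1][0]


def tone_message(attack_ms, decay_ms, freq_hz):
    """Format the '<attack> <decay> <freq>' message body."""
    return f"{attack_ms} {decay_ms} {freq_hz}"
```

For the example message, `total_duration_ms(100, 500)` gives 1100, matching the 100 ms attack, 500 ms sustain and 500 ms decay.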

This can be used to create a wide variety of sound effects with corresponding tactile patterns. Short envelope times result in “click” sounds, whereas longer envelope times result in much softer tones. Generate rhythmic patterns using more expressive programming environments, as trying to do this dynamically in Pure Data would be cumbersome.

Network Messages in Pure Data

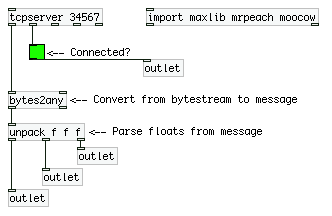

Pure Data is great for generating sound and is used quite often in HCI for this reason. I’ve previously written about how it can be used for creating tactons as well. This post shows a simple patch which receives messages from a local socket and parses the input. As a Pure Data newbie I found documentation to be pretty poor, so I’m hoping this helps others see how to easily integrate Pure Data with other programs using sockets.

Source: networklistener.pd

First the tcpserver object creates a TCP server which listens on the given port number (34567 here). The first outlet has incoming messages; the second has connection status. The bytes2any object takes a bytestream and creates a message from it. As an example of how to parse information from these messages, the unpack object here parses three floats from messages. This patch has four outlets: the first three are the parsed floats, the fourth is connection status (True when a socket is connected to the server).
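To make the parsing step concrete, here is a Python stand-in for what the bytes2any and unpack objects do with a message such as "100 500 440". It is only an illustration of the idea, not a port of the patch: the function name is hypothetical and the `;` terminator is an assumption about the wire format.

```python
def parse_three_floats(raw, terminator=b";"):
    """Turn bytes like b'100 500 440;' into a tuple of three floats."""
    text = raw.decode("ascii").strip()
    term = terminator.decode("ascii")
    # Drop the (assumed) terminator if it is present.
    if term and text.endswith(term):
        text = text[:-len(term)]
    fields = text.split()
    if len(fields) != 3:
        raise ValueError(f"expected 3 values, got {len(fields)}")
    first, second, third = (float(field) for field in fields)
    return first, second, third
```

A function like this is handy for testing a client without Pure Data running: feed it the bytes the client would send and check that three numbers come back.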

Creating Tactons in Pure Data

What are Tactons?

Tactons are “structured tactile messages” for communicating non-visual information. Structured patterns of vibration can be used to encode information, for example a quick buzz to tell me that I have a new email or a longer vibration to let me know that I have an incoming phone call.

Vibrotactile actuators are often used in HCI research to deliver Tactons as these provide a higher fidelity of feedback than the simple rotation motors used in mobile phones and videogame controllers. Sophisticated actuators allow us to change more vibrotactile parameters, providing more potential dimensions for Tacton design. Whereas my previous example used the duration of vibration to encode information (short = email, long = phone call), further information could also be encoded using a different vibrotactile parameter. Changing the “roughness” of the feedback could be used to indicate how important an email or phone call is, for example.

How do we create Tactons?

Now that we know what Tactons are and what they could be used for, how do we actually create them? How can we drive a vibrotactile actuator to produce different tactile sensations?

Linear and voice-coil actuators can be driven by providing a voltage but, rather than dabble in electronics, the HCI community typically uses audio signals to drive the actuator. A sine wave, for example, produces a smooth and continuous-feeling sensation. For more information on how audio signal parameters can be used to create different vibrotactile sensations, see [1], [2] and [3].

Tactons can be created statically using an audio synthesiser or a sound editing program like Audacity to generate sine waves, or can be created dynamically using Pure Data. The rest of this post is going to be a quick summary of Pure Data components which can be used in creating vibrotactile feedback in real-time. I’ve just provided an overview of the key components which I use when creating tactile feedback. With the components discussed, the following vibrotactile parameters can be manipulated: frequency, spatial location, amplitude, “roughness” (with amplitude modulation) and duration.
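For the static route, a sine wave can also be generated with a few lines of Python instead of a sound editor. This is a minimal sketch using only the standard library; the function names, the 44.1 kHz sample rate and the mono 16-bit format are assumptions chosen for illustration.

```python
import io
import math
import struct
import wave


def sine_samples(freq_hz, duration_ms, amplitude=1.0, rate=44100):
    """Return sine-wave samples in the range [-amplitude, amplitude]."""
    count = int(rate * duration_ms / 1000)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / rate)
            for i in range(count)]


def wav_bytes(samples, rate=44100):
    """Encode samples as a mono 16-bit PCM WAV file and return its bytes."""
    frames = b"".join(
        struct.pack("<h", int(max(-1.0, min(1.0, s)) * 32767))
        for s in samples
    )
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)   # mono
        wav.setsampwidth(2)   # 16-bit samples
        wav.setframerate(rate)
        wav.writeframes(frames)
    return buffer.getvalue()
```

Writing `wav_bytes(sine_samples(150, 200))` to a `.wav` file gives a 200 ms, 150 Hz vibration that can be played through an actuator like any other audio file.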

Tactons with Pure Data components

osc~ Generates a sine wave. The first inlet or argument sets the frequency of the sine wave, e.g. osc~ 250 creates a 250 Hz signal.

dac~ Audio output. The arguments list the output channel numbers and each inlet sends an incoming signal to the corresponding channel, e.g. dac~ 1 2 3 4 creates a four-channel audio output. Driving different actuators with different audio channels allows vibration to be encoded spatially.

*~ Multiply signal. Multiplies two signals to produce a single signal. Amplitude modulation (see [2] and [3] above) can be used to create different textures by multiplying two sine waves together. Multiplying osc~ 250 with osc~ 30 creates quite a “rough” feeling texture. This can also be used to change the amplitude of a signal. Multiplying by 0 silences the signal. Multiplying by 0.5 reduces amplitude by 50%. Tactons can be turned on and off by multiplying the wave by 1 and 0, respectively.
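The same multiplication can be sketched offline in Python, which may help when checking what a modulated signal looks like before building it in a patch. The function name, the default values and the sample rate are illustrative assumptions, and the starting phase of the two waves is ignored.

```python
import math


def am_samples(carrier_hz=250, modulator_hz=30, duration_ms=1000,
               gain=1.0, rate=44100):
    """Multiply two sine waves sample by sample, then scale by gain."""
    count = int(rate * duration_ms / 1000)
    samples = []
    for i in range(count):
        t = i / rate  # time of this sample in seconds
        carrier = math.sin(2 * math.pi * carrier_hz * t)
        modulator = math.sin(2 * math.pi * modulator_hz * t)
        samples.append(gain * carrier * modulator)
    return samples
```

Here `gain=0.5` halves the amplitude and `gain=0` silences the signal, mirroring the second use of *~ described above.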

delay Sends a bang after a delay. This can be used to provide precise timings for tacton design. To play a 300 ms vibration, for example, an incoming bang could send 1 to the right inlet of *~, enabling the tacton. Sending that same bang to delay 300 would produce a second bang after 300 ms, which could then send 0 to the same inlet, ending the tacton.
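An offline analogue of this on/off gating, as a rough Python sketch: samples inside the "on" window are multiplied by 1 and everything after it by 0. The function name and sample rate are assumptions; in a live patch the timing comes from delay rather than from counting samples.

```python
def gate(samples, on_ms, rate=44100):
    """Keep the first on_ms milliseconds of samples and silence the rest."""
    on_samples = int(rate * on_ms / 1000)
    return [s * (1.0 if i < on_samples else 0.0)
            for i, s in enumerate(samples)]
```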

phasor~ Creates a ramping waveform. Can be used to create sawtooth waves. This tutorial explains how this component can also be used to create square waveforms.
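The relationship between the ramp and the other waveforms can be sketched in Python. Thresholding the ramp at its halfway point is one common way to get a square wave; it is shown here as an assumption and is not necessarily the approach the linked tutorial takes. The function names are hypothetical.

```python
def phasor(freq_hz, i, rate=44100):
    """Ramp from 0 up to (but not including) 1, repeating freq_hz times a second."""
    return (freq_hz * i / rate) % 1.0


def sawtooth(freq_hz, i, rate=44100):
    """Rescale the 0..1 ramp to a -1..1 sawtooth."""
    return 2.0 * phasor(freq_hz, i, rate) - 1.0


def square(freq_hz, i, rate=44100):
    """High for the first half of each ramp cycle, low for the second half."""
    return 1.0 if phasor(freq_hz, i, rate) < 0.5 else -1.0
```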